As I wrote in my previous post, I’m building my own VMware vSphere Hypervisor (a.k.a. ESXi) host. Today I was able to get the first Debian server running on it! Let me walk through the platform installation.

Planning the Hardware

I was totally not planning to get a rackmount server or even a tower running at home. My hope was to get a small, cheap and silent desktop PC that could run the vSphere Hypervisor with only minor hardware tweaks. I was aware of the limitations in the hardware support in the Hypervisor but I could not find all the definitive answers in the VMware Compatibility Guide (www.vmware.com). Briefly, these were part of my hardware assessment:

- CPU: I wanted at least two cores, preferably with Hyper-Threading.

- RAM: I don’t plan to run production-grade Windows servers on the platform, so about 4 GB is OK to start with, but at least 8 GB has to be supported. The free Hypervisor 5.1 supports up to 32 GB.

- Network: Intel and Broadcom NICs are apparently well supported in Hypervisor 5.1. One NIC is fine for now, but the possibility to add at least one more would be good.

- Disk: I don’t have NFS or iSCSI NAS and don’t need RAID, so I just hoped that the onboard SATA with some 500 GB of disk would be supported.

I found a Lenovo ThinkCentre Edge 72 SFF (shop.lenovo.com) in my local dealer’s webshop (www.data-systems.fi) and it looked good, at a very reasonable price in December. The model is 3493-EQG (according to the box) and it has:

- Small form factor, meaning only one 3.5″ HDD (500 GB) and a DVD writer

- Intel Core i3-2120 (Sandy Bridge, 3.3 GHz, two cores plus Hyper-Threading), Intel H61 chipset

- Two DIMM slots, one 4 GB module attached, supports max 16 GB RAM

- One low profile PCIe x16 slot

- Two low profile PCIe x1 slots

- Serial and parallel ports (I didn’t know these still existed! Now I can access the console of my SRX100 with a live CD if sh*t hits the fan!)

- and it happens to be silent as a whisper.

I knew that the onboard NIC was based on a Realtek chip and that it was not listed in the VMware Compatibility Guide, so I also bought an Intel Gigabit CT Desktop Adapter (www.intel.com) with the system. It came with both a normal and a low profile bracket.

Starting the System

The system had Windows 7 Professional 64-bit preinstalled (no removable media was included), so I thought I should save it in case I needed it later for some reason. First I used the Clonezilla Live CD (clonezilla.org) to save an image (about 25 GB) to my LaCie NAS over Samba, and then I also started Windows and used the supplied ThinkVantage tools to create the boot and rescue DVDs (four of them) from the system. (Belt and suspenders, I guess.)

I mounted the low profile bracket on the Intel NIC and installed it in one of the two PCIe x1 slots.

Then it was the moment of truth: I plugged in the Hypervisor USB stick I had previously created, and started the system. It booted automatically from the USB stick before the hard disk.

ESXi started with no complaints, as far as I can tell!

I logged in on the console (using the root password I set while previously creating the USB stick) and configured the IP address settings for the management network. At the moment I only have the Intel NIC without any VLAN trunks. And, as expected, there was no sign of the Realtek NIC so the onboard NIC is apparently unusable in this system.

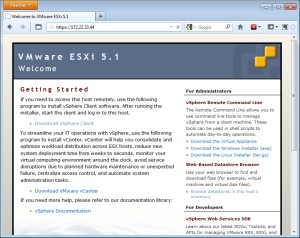

It appeared that there was nothing much to do on the console so I started the browser on my laptop and went to the IP address of the host.

There was nothing much to do there either, except to download the vSphere Client, which I obviously did on my laptop. With the Client I was able to log in to the host (again, using the same root credentials).

In the Client I browsed around for a while as I had never seen that interface before. These are the steps I finally took in the Configuration tab to get things started:

- Licensed Features: I visited the My VMware (my.vmware.com) portal to get the free never-expiring license key and entered it here (otherwise there is a 60-day evaluation period)

- Time Configuration: I configured my ISP’s NTP server addresses

- Virtual Machine Startup/Shutdown: I enabled it to get the VMs running again if the system has an unexpected power loss

- Storage – Devices view: Ha! It detected my SATA disk just fine! I went to the Devices view and renamed the disk to a more convenient “Local ATA Disk” (it had a long name consisting of lots of underscores and serial numbers etc).

- Storage – Datastores view: I used the Add Storage… link to allocate the whole 500 GB disk (465something GB as it was) as one datastore and gave it the name “WD500G”.
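
The “465something GB” is most likely just a unit difference: disks are sold in decimal gigabytes, while the size shown appears to be in binary units. A rough sketch of the arithmetic, assuming the disk holds exactly 500 × 10^9 bytes (the real drive differs slightly, and any VMFS overhead is ignored here):

```python
# Convert a disk size given in decimal GB into binary GiB.
# Assumption: the "500 GB" disk holds exactly 500 * 10**9 bytes.

def decimal_gb_to_gib(size_gb: float) -> float:
    """Return the size in GiB (2**30 bytes) for a size given in GB (10**9 bytes)."""
    return size_gb * 10**9 / 2**30


print(f"{decimal_gb_to_gib(500):.2f} GiB")  # prints "465.66 GiB"
```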

A couple of words about the datastores: The concept is that you allocate your disks, LUNs, NFS shares or whatever as datastores in vSphere. One datastore is like one partition if you are using local disks. If you want, you can create several datastores on one disk. I didn’t see any need for that so I just allocated the whole disk as one big datastore. Datastores on local disks are automatically formatted with VMFS.

The existing partitions (the factory-installed Windows 7 NTFS partitions) were automatically deleted from the disk. I didn’t find any way in the vSphere Client to explicitly delete the NTFS partitions; it just happened in the datastore addition process. If there had been some unpartitioned space on the disk, maybe it would have been possible to allocate it for a datastore and leave the other partitions alone. I don’t know as I didn’t have that situation.

I had previously read an article about the scratch partition in systems started from USB: http://kb.vmware.com/kb/1033696. Basically, in such systems the logs and other files are saved on a volatile ramdisk (because /tmp/scratch is there), which is bad in practice: when the system powers off, the logs are all lost.

So, I went to the Datastore Browser (by right-clicking on the datastore) and created a scratch folder in the datastore, and then configured the Advanced Settings as described in the article (ScratchConfig.ConfiguredScratchLocation = /vmfs/volumes/WD500G/scratch; the path is automatically expanded to a more precise technical format).

In the screenshot below there are also two other folders in the datastore:

- Debian1: It was created automatically when I created a virtual machine called Debian1 on this datastore. It seems to contain all of the settings and virtual disks of the virtual machine.

- ISO CDs-DVDs: I created this folder and used the Upload button in the toolbar to transfer some DVD images into the host. Later I can use the images to install operating systems for the virtual machines. I don’t want to play with physical DVDs unless I really have to.

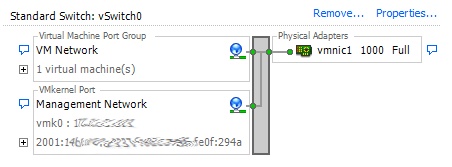

Networking

The networking settings look like this at the moment:

As shown I only have one physical NIC detected and it seems to be called vmnic1. My VMs and the host management connections are using the same network for connectivity.

The management connection with vSphere Client seems to work fine with IPv6 as well.

Let’s Call This a Success

I can only come to one main conclusion: The host implementation is successful!

I haven’t really test-driven the platform yet, since one small 256 MB Debian VM does not cause any kind of load by itself, but it is a start anyway.

Other steps to possibly follow later:

- Add at least one NIC or maybe even dual/quad port NIC to get more possibilities for network implementations. Send me your extra Intel NICs.

- Add more RAM. Just because I can.

- Create a Linux VM for my own SSL CA and then deploy the certificates for all my internal SSL purposes like the Hypervisor management access. Not really related to virtualization as such but one action point anyway.

Installing ESXi patches:

esxcli software vib install --depot=/vmfs/volumes/WD500G/ESXi\ updates/ESXi510-201212001.zip

Hi.

Just one question if you do not mind. You are using vSphere Hypervisor that is installed on a USB stick to configure VMs on the same PC where this USB stick is plugged in? No other hardware as a host, no connection to other, remote hosts, etc.?

If so then this is simply great news. Are you satisfied with performance?

Hi! You are correct, the USB stick is plugged into the same server I’m running the VMs on. The internal 500GB disk is all used for the VMs and other purposes like storing the CD and DVD images I use.

Absolutely satisfied with the performance. The experience of course depends on the expectations and VM usage. I currently have 12 GB of RAM in the system so I won’t run out of RAM with my VMs. Obviously I can still run out of processing power, disk I/O or network capacity in my installation, but at the moment that’s a purely theoretical possibility.

Thanks for such a fast reply.

Indeed, this is fantastic news. I’ve been searching for the past 2 days for this kind of solution. I am fairly new to virtualization and reluctant about the concept of VMware Workstation (OS, VMware, OS, OS…). Basically, I was hoping such a configuration would work for my Android build environment on Linux, web development on Windows and common, day-to-day simple tasks. I guess I’ll simply have to try it now 🙂

From what I’ve been able to gather, the biggest possible issue might be multimedia (e.g. sound and video), but in the worst case I can resort to VMware Workstation on similar technology. Let’s see how it goes…

Thank you for valuable information. 😉

Can someone help me build a VM at home?

I installed ESXi on a box that has no operating system. What do I do next?

How to check ESXi version:

~ # vmware -vl

VMware ESXi 5.1.0 build-1483097

VMware ESXi 5.1.0 Update 2
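
If you collect this output from several hosts, the version and build number are easy to pull out with a script. A minimal sketch in Python, assuming the first line always has the form shown above; the names `EsxiVersion` and `parse_banner` are my own for illustration and not part of any VMware tool:

```python
import re
from typing import NamedTuple, Optional


class EsxiVersion(NamedTuple):
    version: str  # e.g. "5.1.0"
    build: int    # e.g. 1483097


# Matches a line such as "VMware ESXi 5.1.0 build-1483097".
_BANNER = re.compile(r"VMware ESXi (\d+(?:\.\d+)*) build-(\d+)")


def parse_banner(output: str) -> Optional[EsxiVersion]:
    """Return the version and build found in `vmware -vl` output, or None."""
    match = _BANNER.search(output)
    if match is None:
        return None
    return EsxiVersion(match.group(1), int(match.group(2)))


# Sample text copied from the output above.
sample = "VMware ESXi 5.1.0 build-1483097\nVMware ESXi 5.1.0 Update 2\n"
print(parse_banner(sample))
```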

Hi,

Just a quick question: how are you able to upload ISO images from the outside world to your ISO CDs-DVDs storage folder? Can you please explain from a networking point of view?

Thank you

Taslim

Hi Taslim, uploading files is really easy with the vSphere Client. Basically you use the Datastore Browser functionality to get to the datastore that your ESXi host knows about, and then you use the buttons in the Datastore Browser to save files to a folder in the datastore.

From a networking point of view, the vSphere Client uses 443/tcp to connect to the ESXi host, and it may use some other ports as well to send the files; VMware has good KB articles about this. Plain and simple TCP sessions, originated from the client side, nothing strange like FTP.

Oh, and how the data goes from the ESXi host to the datastore depends on the way the datastore is connected to the host. In my case I currently have both local SATA storage and also NFS storage. When uploading a file to the NFS-based datastore, the NFS connection happens between the ESXi host and the NFS storage. So the vSphere Client only communicates with the ESXi host (in this case where we have no vCenter), and the ESXi host then handles the datastore communication as appropriate.
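
If the Client cannot reach the host at all, a quick check is whether a TCP session to port 443 can be opened from the client machine. A small sketch in Python using only the standard library; the function name `can_connect` and the example address are placeholders of mine, and this only tests reachability, not the upload itself:

```python
import socket


def can_connect(host: str, port: int = 443, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port can be opened."""
    try:
        # create_connection resolves the name and tries IPv4 and IPv6 as needed.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and name resolution failures.
        return False


# Example (hypothetical address, replace with your host's management IP):
# can_connect("192.0.2.10")
```

A False result only says that the TCP session could not be opened from where the script runs; it does not tell whether a firewall, routing or the host itself is the cause.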